Amy explains 'Attention is all you need'

1. Amy’s guiding question

2. The recurrence bottleneck

3. Short paths, stronger learning

4. Parallelism as the design goal

5. A clean encoder–decoder scaffold

6. Encoder: mix across tokens, then refine

7. Decoder: autoregression with a safety rail

8. Attention as Q, K, V

9. Why divide by \(\sqrt{d_k}\)

10. Multi-head attention: many views at once

11. Heads, dimensions, and compute balance

12. Order without recurrence

13. Results: BLEU and practical cost

14. Ablations and the closing claim

Story Setup

1. Amy’s guiding question

Amy, a graduate student, plans her reading-group talk around one question: why abandon recurrence? She frames sequence modeling as a trade-off between accuracy and practicality. Her narrative therefore has to explain not just what the new approach does, but why it changes the day-to-day reality of training and deploying models on long, real-world sequences.

Motivation

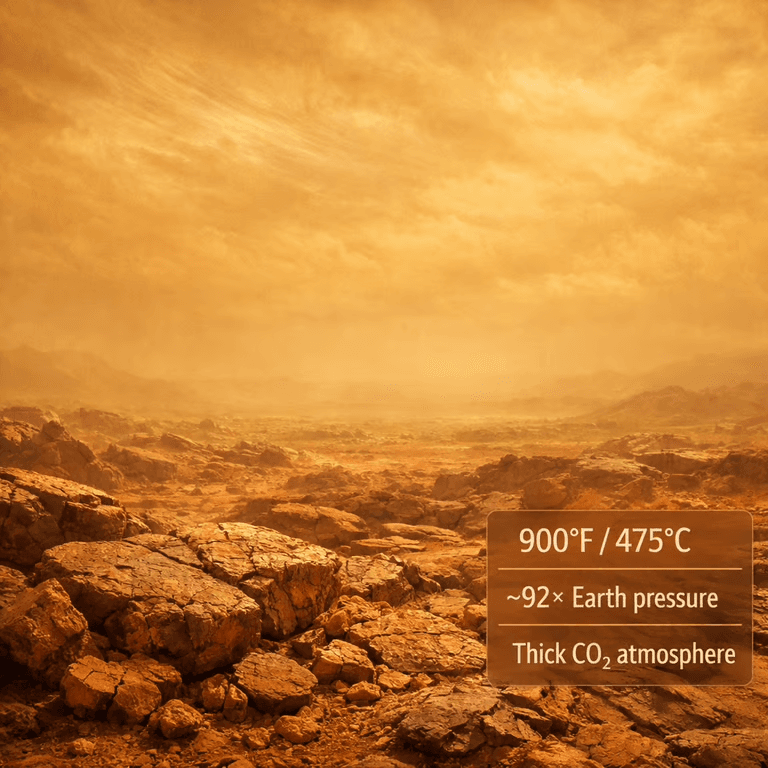

2. The recurrence bottleneck

She starts with the pain point: recurrent models compute hidden states one step at a time, each depending on the previous one, so the computation within a single example can’t be parallelized across positions. Longer sequences mean higher latency, and memory constraints limit how much batching across examples can compensate. For Amy’s audience, this becomes the core motivation: sequential dependence turns into the dominant cost.

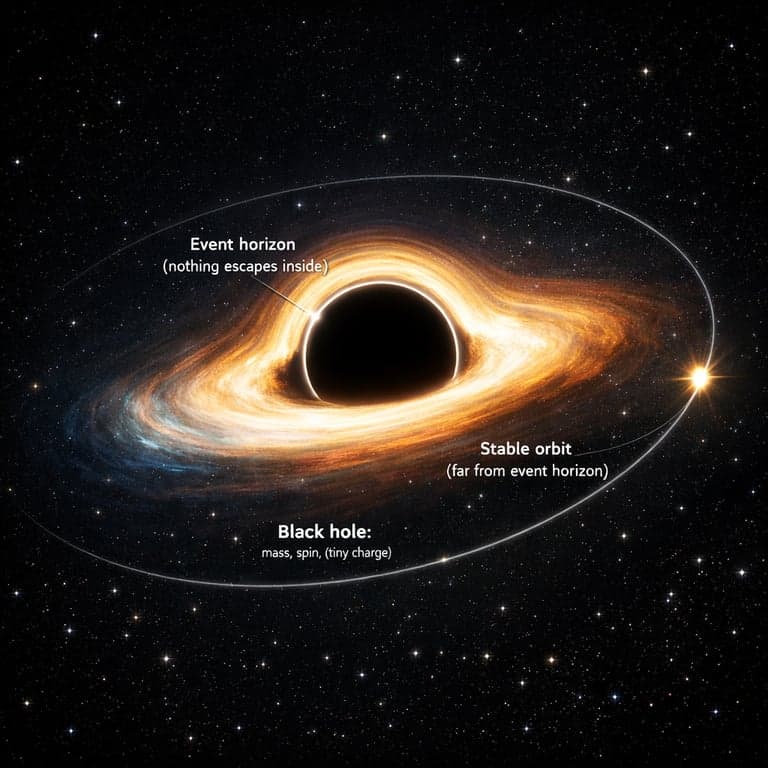

3. Short paths, stronger learning

Amy contrasts “many hops” with “direct contact.” In self-attention, any token can interact with any other token within a single layer, so the path between two positions has constant length, whereas in a recurrent layer a signal must pass through one step per intervening position. She emphasizes why that matters in practice: shorter paths generally help gradients travel, making long-range structure easier to learn than through long chains of stepwise updates.

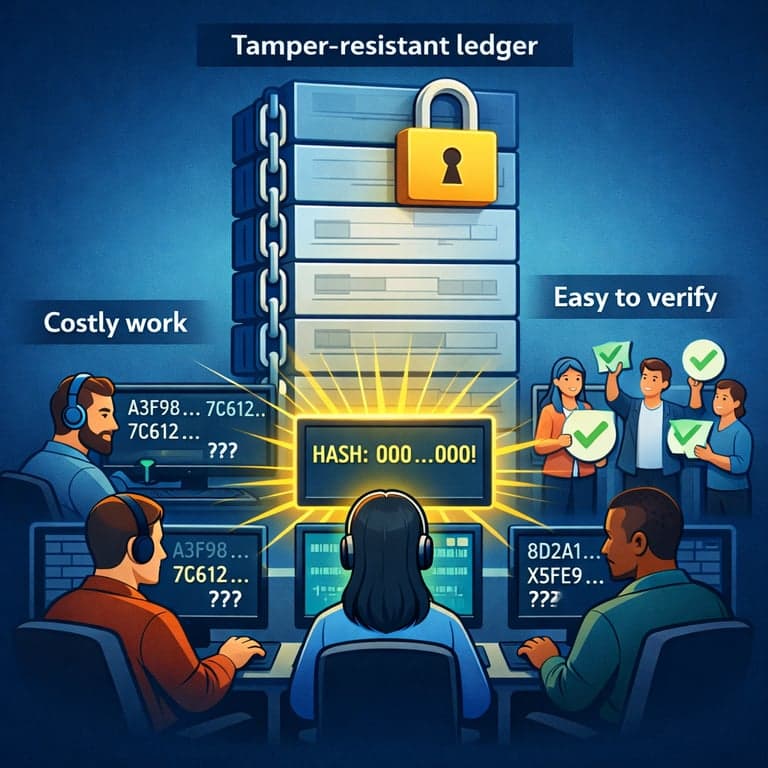

4. Parallelism as the design goal

She explains the architectural bet: if the model can process all positions simultaneously, training time and hardware utilization improve dramatically. Instead of designing around time steps, the model is designed around matrix operations that scale well on GPUs. Amy frames this as turning sequence modeling into a problem of batched linear algebra rather than serialized computation.

Architecture

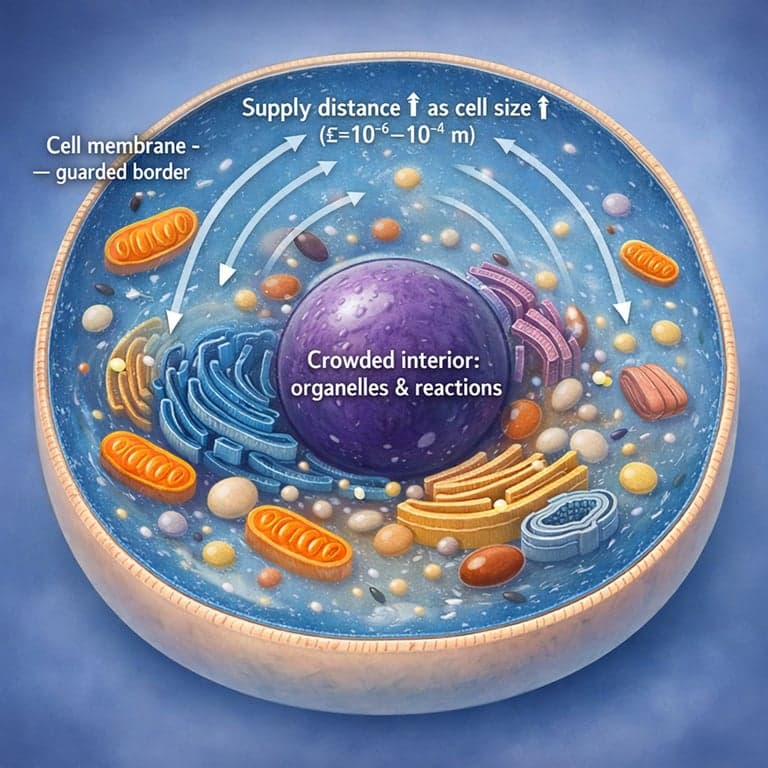

5. A clean encoder–decoder scaffold

Amy introduces the Transformer as an encoder–decoder that keeps the familiar translation pipeline but swaps out recurrence for repeated blocks. Each block is built from self-attention plus a position-wise feed-forward network, wrapped with residual connections and layer normalization so that deep stacks train stably. The result feels modular: stack layers, keep widths consistent, and train efficiently.
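The wrapping around each sublayer can be written as LayerNorm(x + Sublayer(x)), the arrangement used in the original paper. What follows is a minimal standard-library sketch for a single token vector, not a reference implementation: the names `layer_norm` and `add_and_norm` are illustrative, the learned gain and bias of layer normalization are left out, and `eps` is an assumed stabilizer value.

```python
import math

def layer_norm(x, eps=1e-6):
    """Normalize one token vector to zero mean and (near) unit variance.

    The learned gain and bias are omitted in this sketch.
    """
    mean = sum(x) / len(x)
    var = sum((v - mean) ** 2 for v in x) / len(x)
    return [(v - mean) / math.sqrt(var + eps) for v in x]

def add_and_norm(x, sublayer):
    """Residual connection followed by normalization: LayerNorm(x + Sublayer(x))."""
    y = sublayer(x)
    return layer_norm([a + b for a, b in zip(x, y)])

# Toy sublayer standing in for attention or the feed-forward network.
out = add_and_norm([1.0, 2.0, 3.0, 4.0], lambda v: [0.5 * t for t in v])
print(out)
```

Because the input is added back before normalization, the sublayer only has to learn a correction to `x`, which is one common explanation for why such stacks remain trainable at depth.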

6. Encoder: mix across tokens, then refine

In the encoder, each layer first mixes information across the input sequence using self-attention, then refines features at each position using the same small MLP applied independently per token. Amy highlights the separation of roles: attention handles cross-token communication, while the feed-forward sublayer increases per-token representation power without needing extra sequence steps.
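The "same small MLP applied independently per token" is, in the paper, FFN(x) = max(0, xW1 + b1)W2 + b2. The sketch below applies it position by position in plain Python. The toy weights and sizes (model width 2, inner width 3) are assumptions chosen so the arithmetic is easy to follow, and the helper names are illustrative.

```python
def relu(v):
    return [max(0.0, t) for t in v]

def linear(x, weights, bias):
    """Compute x @ weights + bias for one token vector x."""
    return [sum(x[i] * weights[i][j] for i in range(len(x))) + bias[j]
            for j in range(len(bias))]

def feed_forward(x, w1, b1, w2, b2):
    """FFN(x) = max(0, x W1 + b1) W2 + b2, applied to a single position."""
    return linear(relu(linear(x, w1, b1)), w2, b2)

# Toy sizes (assumptions): model width 2, inner width 3.
W1 = [[1.0, -1.0, 0.5], [0.0, 2.0, -1.0]]
B1 = [0.0, 0.0, 0.0]
W2 = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
B2 = [0.0, 0.0]

tokens = [[1.0, 2.0], [3.0, -1.0]]
# The same weights are reused at every position; no token sees another here.
refined = [feed_forward(t, W1, B1, W2, B2) for t in tokens]
print(refined)
```

Since each position is processed on its own, all cross-token communication in the layer has to come from the attention sublayer that precedes it.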

7. Decoder: autoregression with a safety rail

Amy then walks through the decoder: it mirrors the encoder but adds encoder–decoder attention so output tokens can consult the encoded input. The key guardrail is causal masking: each position can attend only to positions up to and including its own, and because the decoder inputs are shifted right by one, each prediction depends only on earlier outputs, so generation remains autoregressive. She describes it as letting the model “look back, not ahead,” while still using parallel computation during training.
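The masking rule can be illustrated with a lower-triangular mask applied before the softmax. This is a minimal sketch assuming uniform toy scores; `causal_mask` and `masked_softmax` are illustrative names, and disallowed positions are set to negative infinity so they receive zero weight.

```python
import math

def causal_mask(n):
    """mask[i][j] is True when query position i may attend to key position j."""
    return [[j <= i for j in range(n)] for i in range(n)]

def masked_softmax(scores, allowed):
    """Softmax over one row of scores, treating disallowed entries as -infinity."""
    masked = [s if ok else -math.inf for s, ok in zip(scores, allowed)]
    peak = max(masked)  # position 0 is always allowed, so peak is finite
    exps = [math.exp(s - peak) for s in masked]
    total = sum(exps)
    return [e / total for e in exps]

mask = causal_mask(4)
scores = [0.0, 0.0, 0.0, 0.0]  # uniform toy scores for every query row
weights = [masked_softmax(scores, row) for row in mask]
for row in weights:
    print(row)
```

Every row is computed independently of the others, which is why the whole target sequence can be processed in one pass during training even though generation at inference time still proceeds token by token.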

Attention Mechanics

8. Attention as Q, K, V

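In the paper, attention maps queries, keys, and values to outputs as \(\mathrm{Attention}(Q, K, V) = \mathrm{softmax}(QK^\top / \sqrt{d_k})\,V\). The following is a small standard-library sketch of that formula, with matrices as lists of row vectors. The toy inputs are assumptions picked so that the single query matches the first key more closely than the second.

```python
import math

def softmax(row):
    peak = max(row)
    exps = [math.exp(v - peak) for v in row]
    total = sum(exps)
    return [e / total for e in exps]

def attention(Q, K, V):
    """Scaled dot-product attention: softmax(Q K^T / sqrt(d_k)) V."""
    d_k = len(K[0])
    out = []
    for q in Q:
        # Compatibility of this query with every key, scaled by sqrt(d_k).
        scores = [sum(a * b for a, b in zip(q, k)) / math.sqrt(d_k) for k in K]
        weights = softmax(scores)
        # The output is the weighted sum of the value vectors.
        out.append([sum(w * v[j] for w, v in zip(weights, V))
                    for j in range(len(V[0]))])
    return out

Q = [[1.0, 0.0]]
K = [[1.0, 0.0], [0.0, 1.0]]
V = [[10.0, 0.0], [0.0, 10.0]]
result = attention(Q, K, V)
print(result)
```

The output leans toward the first value vector because the query aligns with the first key. It remains a blend of both values rather than a hard lookup.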

9. Why divide by \(\sqrt{d_k}\)

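The paper motivates the scaling with a variance argument, sketched here under its stated assumption that the components of \(q\) and \(k\) are independent random variables with mean 0 and variance 1:

\[
q \cdot k = \sum_{i=1}^{d_k} q_i k_i, \qquad
\mathbb{E}[q \cdot k] = 0, \qquad
\operatorname{Var}(q \cdot k) = \sum_{i=1}^{d_k} \operatorname{Var}(q_i k_i) = d_k
\]

\[
\operatorname{Var}\!\left(\frac{q \cdot k}{\sqrt{d_k}}\right) = \frac{d_k}{d_k} = 1
\]

Without the division, the typical magnitude of the scores grows like \(\sqrt{d_k}\), which pushes the softmax into regions where its gradients are extremely small. Dividing by \(\sqrt{d_k}\) keeps the scores at unit scale regardless of the key dimension.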

10. Multi-head attention: many views at once

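For reference, the paper defines multi-head attention by projecting queries, keys, and values \(h\) times, attending in each projected subspace, and concatenating the results:

\[
\mathrm{MultiHead}(Q, K, V) = \mathrm{Concat}(\mathrm{head}_1, \dots, \mathrm{head}_h)\, W^O,
\qquad
\mathrm{head}_i = \mathrm{Attention}(Q W_i^Q,\; K W_i^K,\; V W_i^V)
\]

\[
W_i^Q, W_i^K \in \mathbb{R}^{d_{\text{model}} \times d_k}, \qquad
W_i^V \in \mathbb{R}^{d_{\text{model}} \times d_v}, \qquad
W^O \in \mathbb{R}^{h d_v \times d_{\text{model}}}
\]

Each head has its own learned projections, so each can form a different attention pattern over the same sequence.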

11. Heads, dimensions, and compute balance

Amy clarifies why splitting into heads doesn’t explode cost: if the model keeps total width fixed, each head operates on smaller vectors, so total computation stays comparable to a single large attention. The payoff is qualitative: different heads can specialize, reducing the pressure for one attention map to average over many relationships, which often improves modeling of syntax, alignment, and context.
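The cost argument can be checked with a little arithmetic. The sketch below counts projection weights while the head count varies and the model width stays fixed, assuming \(d_k = d_v = d_{\text{model}} / h\) as in the paper's base configuration (width 512, 8 heads). The function name `projection_params` is illustrative, and biases are ignored.

```python
def projection_params(d_model, num_heads):
    """Count weights in the Q, K, V and output projections (biases ignored)."""
    assert d_model % num_heads == 0, "d_model must split evenly across heads"
    d_head = d_model // num_heads          # d_k = d_v = d_model / h
    per_head = 3 * d_model * d_head        # W_i^Q, W_i^K, W_i^V for one head
    output = num_heads * d_head * d_model  # W^O maps concatenated heads back
    return d_head, num_heads * per_head + output

for heads in (1, 2, 4, 8, 16):
    d_head, total = projection_params(512, heads)
    print(f"h={heads:2d}  d_k={d_head:3d}  projection weights={total}")
```

The total is the same for every head count, because the per-head width shrinks exactly as the number of heads grows.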

Positional Information

12. Order without recurrence

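Because attention on its own ignores token order, the paper adds positional encodings to the input embeddings. Its sinusoidal variant is \(PE_{(pos,\,2i)} = \sin(pos / 10000^{2i/d_{\text{model}}})\) and \(PE_{(pos,\,2i+1)} = \cos(pos / 10000^{2i/d_{\text{model}}})\). A minimal sketch of that table follows; the function name and the small sizes are illustrative assumptions.

```python
import math

def positional_encoding(num_positions, d_model):
    """Sinusoidal encodings: sin on even dimensions, cos on odd dimensions."""
    table = []
    for pos in range(num_positions):
        row = []
        for dim in range(d_model):
            pair = dim // 2  # dimensions 2i and 2i+1 share one frequency
            angle = pos / (10000 ** (2 * pair / d_model))
            row.append(math.sin(angle) if dim % 2 == 0 else math.cos(angle))
        table.append(row)
    return table

pe = positional_encoding(num_positions=4, d_model=8)
print(pe[0])  # position 0: sin(0) = 0 on even dims, cos(0) = 1 on odd dims
```

Each row of `pe` would be added to the embedding of the token at that position, so the attention layers can tell identical tokens at different positions apart.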

Results & Ablations

13. Results: BLEU and practical cost

Amy closes the loop with translation outcomes: the model reaches 28.4 BLEU on WMT 2014 English-to-German, more than 2 BLEU above the previous best results (ensembles included), and sets a new single-model state of the art on English-to-French. It does so at notably lower training cost than prior approaches that relied heavily on sequential computation or expensive ensembles. She frames this as the practical win: better quality is important, but better quality that trains faster is what changes what labs can iterate on.

14. Ablations and the closing claim

To finish, Amy highlights what the ablations teach: quality shifts with the number of heads, per-head dimensions, dropout, and model size. In the paper's variations, single-head attention trails the best multi-head setting by 0.9 BLEU, quality also drops with too many heads, and learned positional embeddings perform nearly identically to the sinusoidal encoding. The throughline is consistent: self-attention shortens dependency paths while removing per-step recurrence, enabling faster training and strong accuracy. That is her concluding argument for why this design becomes a new default.